This is the multi-page printable view of this section. Click here to print.

Docs

- 1: Chall-Manager

- 1.1: Ops Guides

- 1.1.1: Deployment

- 1.1.2: Monitoring

- 1.2: ChallMaker Guides

- 1.2.1: Create a Scenario

- 1.2.2: Software Development Kit

- 1.2.3: Update in production

- 1.2.4: Use the flag variation engine

- 1.3: Developer Guides

- 1.3.1: Entensions

- 1.3.2: Integrate with a CTF platform

- 1.4: Design

- 1.4.1: Context

- 1.4.2: The Need

- 1.4.3: Genericity

- 1.4.4: Architecture

- 1.4.5: High Availability

- 1.4.6: Hot Update

- 1.4.7: Security

- 1.4.8: Expiration

- 1.4.9: Software Development Kit

- 1.4.10: Testing

- 1.5: Tutorials

- 1.5.1: A complete example

- 1.6: Security

- 1.7: Glossary

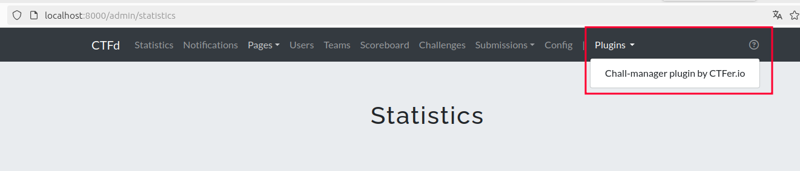

- 2: CTFd-chall-manager

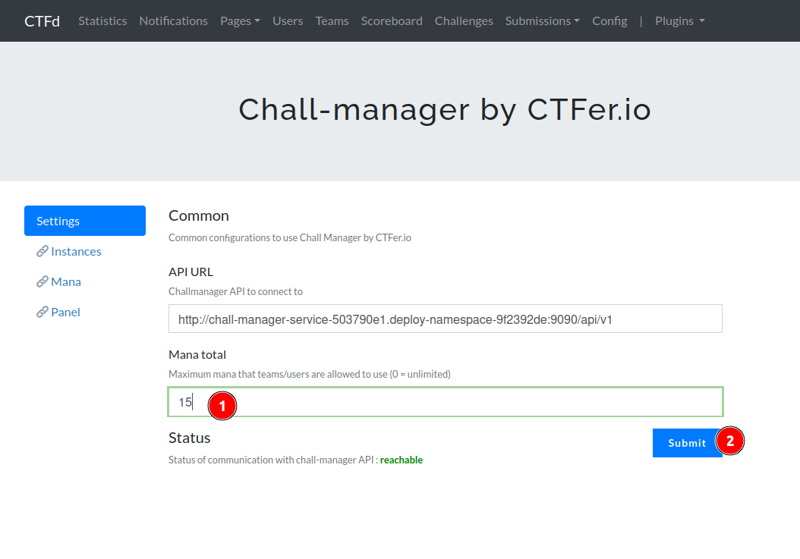

- 2.1: Get started

- 2.1.1: Setup

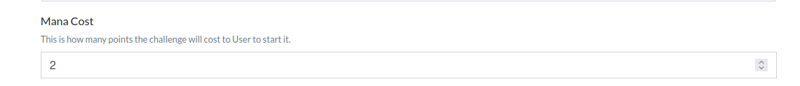

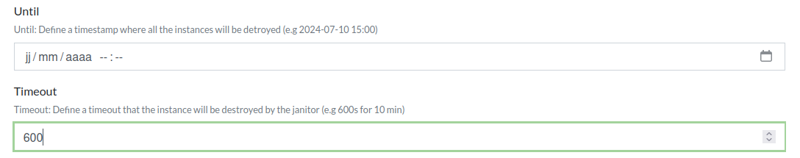

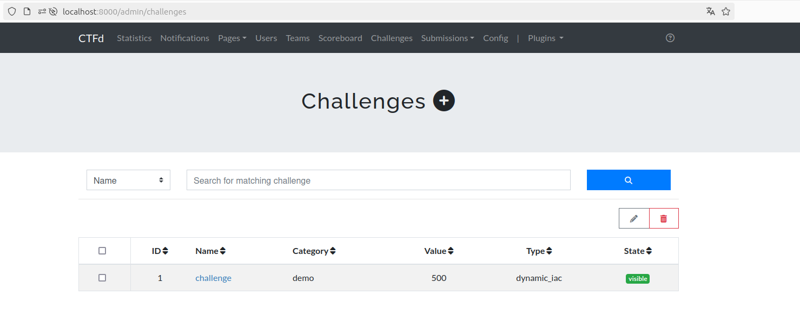

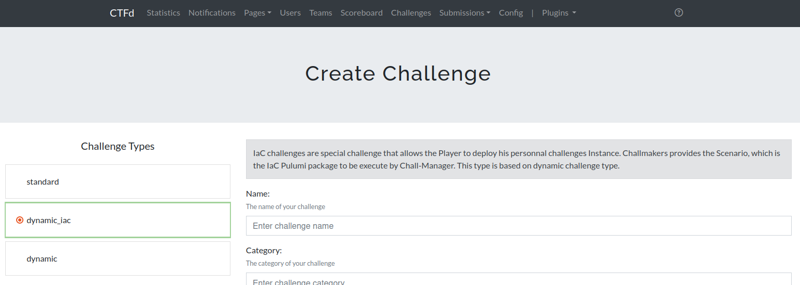

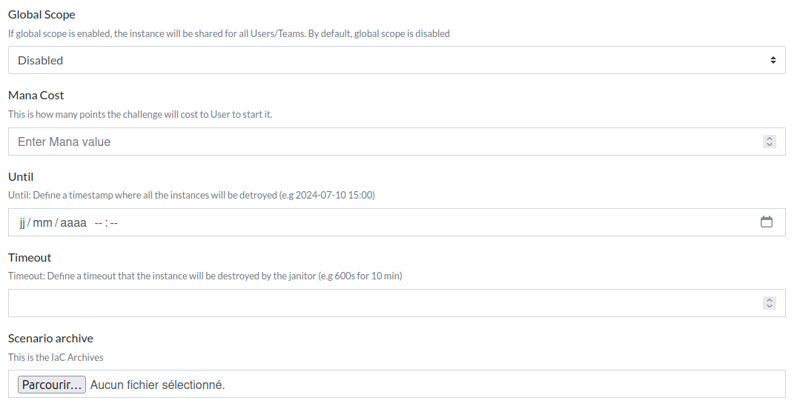

- 2.1.2: Create a challenge

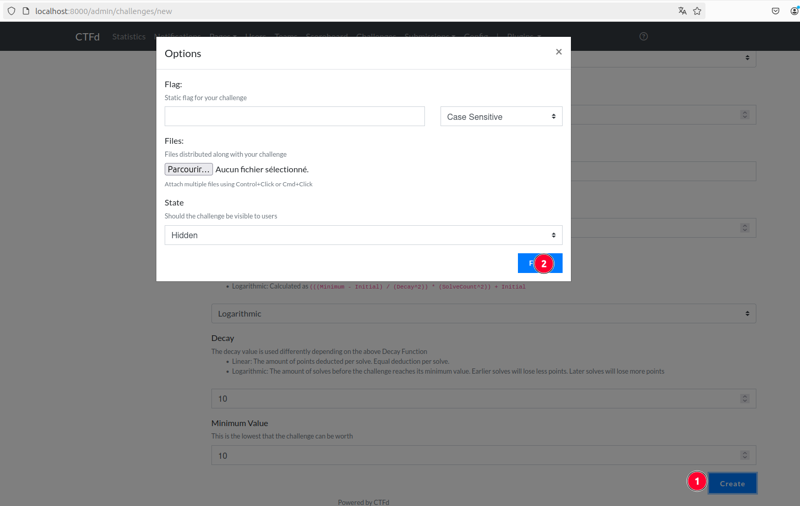

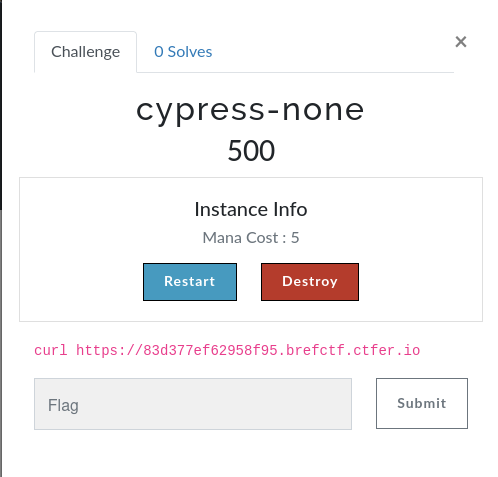

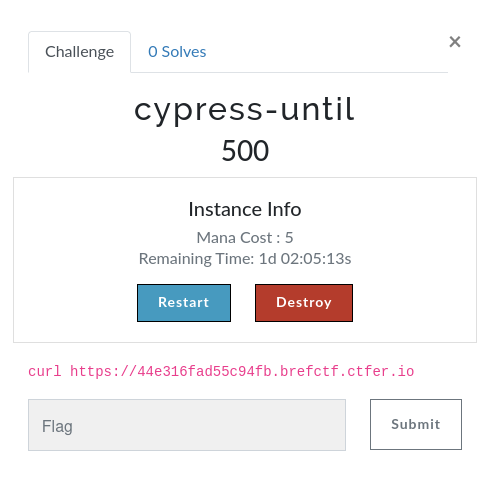

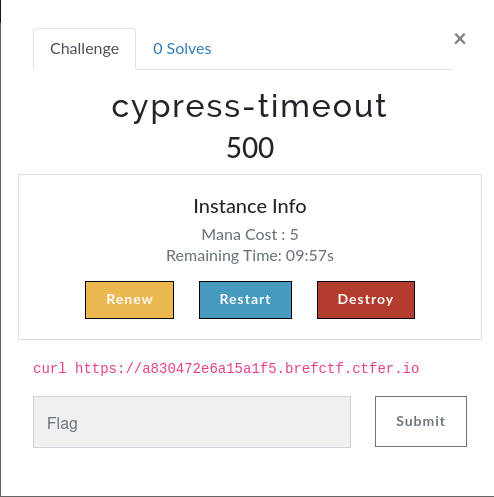

- 2.1.3: Play the challenge

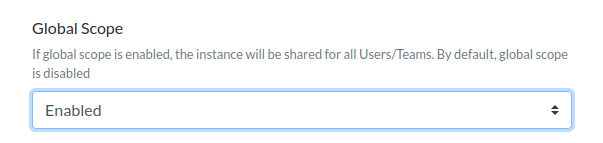

- 2.2: Guides

- 2.3: Design

1 - Chall-Manager

Warning

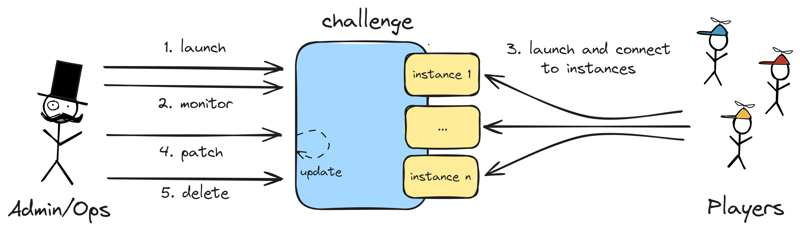

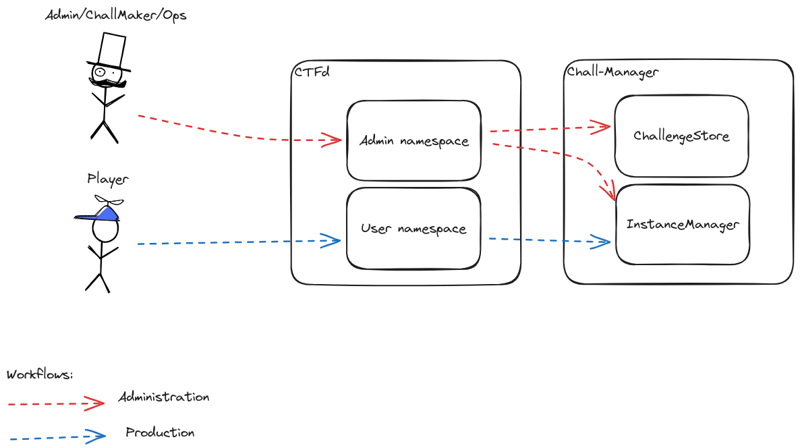

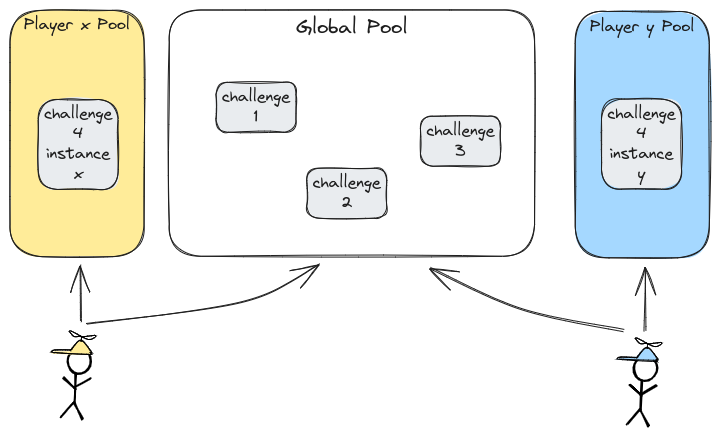

Currently entering private alpha phase, for any issue: ctfer-io@protonmail.comChall-Manager is a MicroService whose goal is to manage challenges and their instances. Each of those instances are self-operated by the source (either team or user) which rebalance the concerns to empowers them.

It eases the job of Administrators, Operators, Chall-Makers and Players through various features, such as hot infrastructure update, non-vendor-specific API, telemetry and scalability.

Thanks to a generic Scenario concept, the Chall-Manager could be operated anywhere (e.g. a Kubernetes cluster, AWS, GCP, microVM, on-premise infrastructures) and run everything (e.g. containers, VMs, IoT, FPGA, custom resources).

1.1 - Ops Guides

1.1.1 - Deployment

You can deploy the Chall-Manager in many ways. The following table summarize the properties of each one.

| Name | Maintained | Isolation | Scalable | Janitor |

|---|---|---|---|---|

| Kubernetes (with Pulumi) | ✅ | ✅ | ✅ | ✅ |

| Kubernetes | ❌ | ✅ | ✅ | ✅ |

| Docker | ❌ | ✅ | ✅¹ | ❌² |

| Binary | ❌ | ❌ | ❌ | ❌² |

¹ Autoscaling is possible with an hypervisor (e.g. Docker Swarm).

² Cron could be configured through a cron on the host machine.

Kubernetes (with Pulumi)

Note

We highly recommend the use of this deployment strategy.

We use it to test the chall-manager, and will ease parallel deployments.

This deployment strategy guarantee you a valid infrastructure regarding our functionalities and security guidelines. Moreover, if you are afraid of Pulumi you’ll have trouble creating scenarios, so it’s a good place to start !

The requirements are:

- a distributed block storage solution such as Longhorn, if you want replicas.

- an etcd cluster, if you want to scale.

- an OpenTelemetry Collector, if you want telemetry data.

- an origin namespace in which the chall-manager will run.

# Get the repository and its own Pulumi factory

git clone git@github.com:ctfer-io/chall-manager.git

cd chall-manager/deploy

# Use it straightly !

# Don't forget to configure your stack if necessary.

# Refer to Pulumi's doc if necessary.

pulumi up

Now, you’re done !

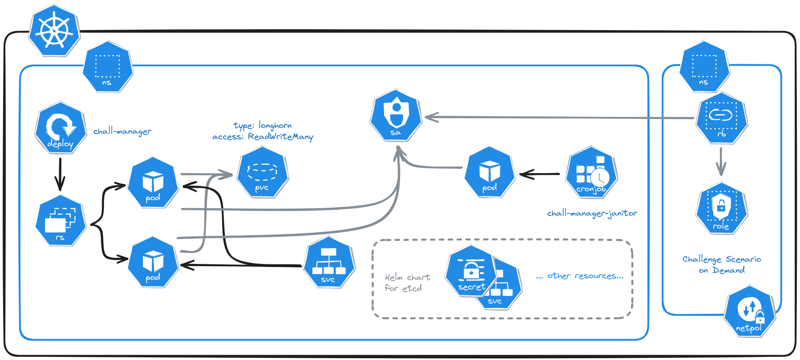

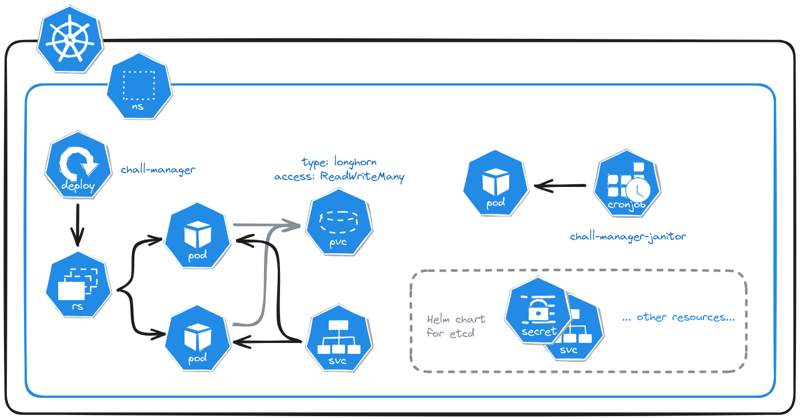

Micro Services Architecture of chall-manager deployed in a Kubernetes cluster.

Kubernetes

With this deployment strategy, you are embracing the hard path of setting up a chall-manager to production. You’ll have to handle the functionalities, the security, and you won’t implement variability easily. We still highly recommend you deploying with Pulumi, but if you love YAMLs, here is the doc.

The requirements are:

- a distributed block storage solution such as Longhorn, if you want replicas.

- an etcd cluster, if you want to scale.

- an OpenTelemetry Collector, if you want telemetry data.

- an origin namespace in which the chall-manager will run.

We’ll deploy the following:

- a target Namespace to deploy instances into.

- a ServiceAccount for chall-manager to deploy instances in the target namespace.

- a Role and its RoleBinding to assign permissions required for the ServiceAccount to deploy resources. Please do not give non-namespaced permissions as it would enable a CTF player to pivot into the cluster thus break isolation.

- a set of 4 NetworkPolicies to ensure security by default in the target namespace.

- a PersistentVolumeClaim to replicate the Chall-Manager filesystem data among the replicas.

- a Deployment for the Chall-Manager pods.

- a Service to expose those Chall-Manager pods, required for the janitor and the CTF platform communications.

- a CronJob for the Chall-Manager-Janitor.

First of all, we are working on the target namespace the chall-manager will deploy challenge instances to. Those steps are mandatory in order to obtain a secure and robust deployment, without players being able to pivot in your Kubernetes cluster, thus to your applications (databases, monitoring, etc.).

The first step is to create the target namespace.

target-namespace.yaml

apiVersion: v1

kind: Namespace

metadata:

name: target-ns

To deploy challenge instances into this target namespace, we are going to need 3 resources: the ServiceAccount, the Role and its RoleBinding. This ServiceAccount should not be shared with other applications, and we are here detailing 1 way to build its permissions. As a Kubernetes administrator, you can modify those steps to aggregate roles, create a Cluster-wide Role and RoleBindng, etc. Nevertheless, we trust our documented approach to be wiser for maintenance and accessibility.

Adjust the role permissions to your needs. You can do this using kubectl api-resources –-namespaced=true –o wide.

role.yaml

apiVersion: rbac.authorieation.k8s.io/v1

kind: Role

metadata:

name: chall-manager-role

namespace: target-ns

labels:

app: chall-manager

rules:

- apiGroups:

- ""

resources:

- configmaps

- endpoints

- persistentvolumeclaims

- pods

- resourcequotas

- secrets

- service

verbs:

- create

- delete

- get

- list

- patch

- update

- watch

- apiGroups:

- apps

resources:

- deployments

- replicasets

- statefulsets

verbs:

- create

- delete

- get

- list

- patch

- update

- watch

- apiGroups:

- batch

resources:

- cronjobs

- jobs

verbs:

- create

- delete

- get

- list

- patch

- update

- watch

- apiGroups:

- networking.k8s.io

resources:

- ingresses

- networkpolicies

verbs:

- create

- delete

- get

- list

- patch

- update

- watch

The the ServiceAccount it will refer to.

service-account.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: chall-manager-sa

metadata: source-ns

labels:

app: chall-manager

Finally, bind the Role and ServiceAccount.

role-binding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

name: chall-manager-role-binding

namespace: target-ns

labels:

app: chall-manager

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: chall-manager-role

subjects:

- kind: ServiceAccount

name: chall-manager-sa

namespace: source-ns

Now, we will prepare isolation of scenarios to avoid pivoting in the infrastructure.

First, we start by denying all networking.

netpol-deny-all.yaml

apiVersion: networking.k8s.io/v1

kind: NetworkPolicy

metadata:

name: netpol-deny-all

namespace: target-ns

labels:

app: chall-manager

spec:

podSelector: {}

policyTypes:

- Ingress

- Egress

Then, make sure that intra-cluster communications are not allowed from this namespace to any other.

netpol-inter-ns.yaml

apiVersion: networking.k8s.io/v1

kind: NetworkPolicy

metadata:

name: netpol-inter-ns

namespace: target-ns

labels:

app: chall-manager

spec:

egress:

- to:

- namespaceSelector:

matchExpressions:

- key: kubernetes.io/metadata.name

operator: NotIn

values:

- target-ns

podSelector: {}

policyTypes:

- Egress

For complex scenarios that require multiple pods, we need to be able to resolve intra-cluster DNS entries.

netpol-dns.yaml

apiVersion: networking.k8s.io/v1

kind: NetworkPolicy

metadata:

name: netpol-dns

namespace: target-ns

labels:

app: chall-manager

spec:

egress:

- ports:

- port: 53

protocol: UDP

- port: 53

protocol: TCP

to:

- namespaceSelector:

matchLabels:

kubernetes.io/metadata.name: kube-system

podSelector:

matchLabels:

k8s-app: kube-dns

podSelector: {}

policyTypes:

- Egress

Ultimately our challenges will probably need to access internet, or our players will operate online, so we need to grant access to internet addresses.

netpol-internet.yaml

apiVersion: networking.k8s.io/v1

kind: NetworkPolicy

metadata:

name: netpol-internet

namespace: target-ns

labels:

app: chall-manager

spec:

egress:

- to:

- ipBlock:

cidr: 0.0.0.0/0

expect:

- 10.0.0.0/8

- 172.16.0.0/12

- 192.168.0.0/16

podSelector: {}

policyTypes:

- Egress

At this step, no communication will be accepted by the target namespace. Every scenario will need to define its own NetworkPolicies regarding its inter-pods and exposed services communications.

Before starting the chall-manager, we need to create the PersistentVolumeClaim to write the data to.

pvc.yaml

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: chall-manager-pvc

namespace: source-ns

labels:

app: chall-manager

spec:

accessModes:

- ReadWriteMany

resources:

requests:

storage: 2Gi # arbitrary, you may need more or less

storageClassName: longhorn # or anything else compatible

volumeName: chall-manager-pvc

We’ll now deploy the chall-manager and provide it the ServiceAccount we created before.

For additionnal configuration elements, refer to the CLI documentation (chall-manager -h).

deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: chall-manager-deploy

namespace: source-ns

labels:

app: chall-manager

spec:

replicas: 1 # scale if necessary

selector:

matchLabels:

app: chall-manager

template:

metadata:

namespace: source-ns

labels:

app: chall-manager

spec:

containers:

- name: chall-manager

image: ctferio/chall-manager:v1.0.0

env:

- name: PORT

value: "8080"

- name: DIR

value: /etc/chall-manager

- name: LOCK_KIND

value: local # or "etcd" if you have an etcd cluster

- name: KUBERNETES_TARGET_NAMESPACE

value: target-ns

ports:

- name: grpc

containerPort: 8080

protocol: TCP

volumeMounts:

- name: dir

mountPath: /etc/chall-manager

serviceAccount: chall-manager-sa

# if you have an etcd cluster, we recommend creating an InitContainer to wait for the cluster to be up and running before starting chall-manager, else it will fail to handle requests

volumes:

- name: dir

persistentVolumeClaim:

claimName: chall-manager-pvc

We need to expose the pods to integrate chall-manager with a CTF platform, and to enable the janitor to run.

service.yaml

apiVersion: v1

kind: Service

metadata:

name: chall-manager-svc

namespace: source-ns

labels:

app: chall-manager

spec:

ports:

- name: grpc

port: 8080

targePort: 8080

protocol: TCP

# if you are using the chall-manager gateway (its REST API), don't forget to add an entry here

selector:

app: chall-manager

Now, to enable the janitoring, we have to create the CronJob for the chall-manager-janitor.

cronjob.yaml

apiVersion: batch/v1

kind: CronJob

metadata:

name: chall-manager-janitor

namespace: source-ns

labels:

app: chall-manager

spec:

schedule: "*/1 * * * *" # run every minute ; configure it elseway if necessary

jobTemplate:

spec:

template:

metadata:

namespace: source-ns

labels:

app: chall-manager

spec:

containers:

- name: chall-manager-janitor

image: ctferio/chall-manager-janitor:v1.0.0

env:

- name: URL

value: chall-manager-svc:8080

Finally, deploy them all.

kubectl apply -f target-namespace.yaml \

-f role.yaml -f service-account.yaml -f role-binding.yaml \

-f netpol-deny-all.yaml -f netpol-inter-ns.yaml -f netpol-dns.yaml -f netpol-internet.yaml \

-f pvc.yaml -f deployment.yaml -f service.yaml \

-f cronjob.yaml

Docker

Warning

This mode does not cover the deployment of the janitor.To deploy the docker container on a host machine, run the following. It will come with a limited set of features, thus will need additional configurations for the Pulumi providers to communicate with their targets.

docker run -p 8080:8080 -v ./data:/etc/chall-manager ctferio/chall-manager:v1.0.0

For the janitor, you may use a cron service on your host machine. In this case, you may also want to create a specific network to isolate them from other adjacent services.

Binary

Security

We highly discourage the use of this mode as it does not guarantee proper isolation. The chall-manager is basically a RCE-as-a-Service carrier, so if you run this on your host machine, prepare for dramatic issues.Warning

This mode does not cover the deployment of the janitor.To deploy the binary on a host machine, run the following. It will come with a limited set of features, thus will need additional configurations for the Pulumi providers to communicate with their targets.

# Download the binary from https://github.com/ctfer-io/chall-manager/releases, then run it

./chall-manager

For the janitor, you may use a cron service on your host machine.

1.1.2 - Monitoring

Once in production, the chall-manager provides its functionalities to the end-users.

But production can suffer from a lot of disruptions: network latencies, interruption of services, an unexpected bug, chaos engineering going a bit too far… How can we monitor the chall-manager to make sure everything goes fine ? What to monitor to quickly understand what is going on ?

Metrics

A first approach to monitor what is going on inside the chall-manager is through its metrics.

Warning

Metrics are exported by the OTLP client. If you did not configure an OTEL Collector, please refer to the deployment documentation.| Name | Type | Description |

|---|---|---|

challenges |

int64 |

The number of registered challenges. |

instances |

int64 |

The number of registered instances. |

You can use them to build dashboards, build KPI or anything else. They can be interesting for you to better understand the tendencies of usage of chall-manager through an event.

Tracing

A way to go deeper in understanding what is going on inside chall-manager is through tracing.

First of all, it will provide you information of latencies in the distributed locks system and Pulumi manipulations. Secondly, it will also provide you Service Performance Monitoring (SPM).

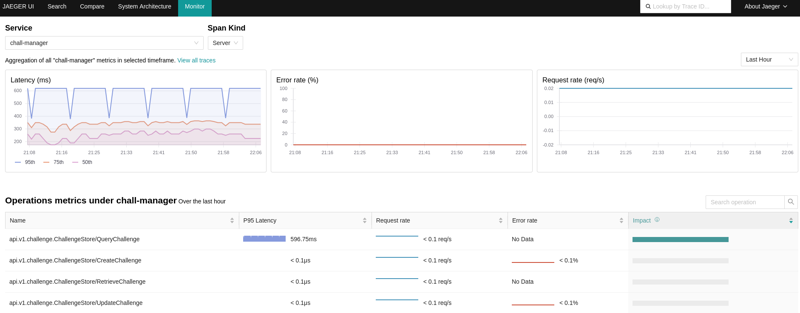

Using the OpenTelemetry Collector, you can configure it to produce RED metrics on the spans through the spanmetrics connector. When a Jaeger is bound to both the OpenTelemetry Collector and the Prometheus containing the metrics, you can monitor performances AND visualize what happens.

An example view of the Service Performance Monitoring in Jaeger, using the OpenTelemetry Collector and Prometheus server.

Through the use of those metrics and tracing capabilities, you could build alerts thresholds and automate responses or on-call alerts with the alertmanager.

A reference architecture to achieve this description follows.

graph TD

subgraph Monitoring

COLLECTOR["OpenTelemetry Collector"]

PROM["Prometheus"]

JAEGER["Jaeger"]

ALERTMANAGER["AlertManager"]

GRAFANA["Grafana"]

COLLECTOR --> PROM

JAEGER --> COLLECTOR

JAEGER --> PROM

ALERTMANAGER --> PROM

GRAFANA --> PROM

end

subgraph Chall-Manager

CM["Chall-Manager"]

CMJ["Chall-Manager-Janitor"]

ETCD["etcd cluster"]

CMJ --> CM

CM --> |OTLP| COLLECTOR

CM --> ETCD

end

1.2 - ChallMaker Guides

1.2.1 - Create a Scenario

You are a ChallMaker or only curious ? You want to understand how the chall-manager can spin up challenge instances on demand ? You are at the best place for it then.

This tutorial will be split up in three parts:

Design your Pulumi factory

We call a “Pulumi factory” a golang code or binary that fits the chall-manager scenario API. For details on this API, refer to the SDK documentation.

The requirements are:

Create a directory and start working in it.

mkdir my-challenge

cd $_

go mod init my-challenge

First of all, you’ll configure your Pulumi factory. The example below constitutes the minimal requirements, but you can add more configuration if necessary.

Pulumi.yaml

name: my-challenge

runtime: go

description: Some description that enable others understand my challenge scenario.

Then create your entrypoint base.

main.go

package main

import (

"github.com/pulumi/pulumi/sdk/v3/go/pulumi"

)

func main() {

pulumi.Run(func(ctx *pulumi.Context) error {

// Scenario will go there

return nil

})

}

You will need to add github.com/pulumi/pulumi/sdk/v3/go to your dependencies: execute go mod tidy.

Starting from here, you can get configurations, add your resources and use various providers.

For this tutorial, we will create a challenge consuming the identity from the configuration and create an Amazon S3 Bucket. At the end, we will export the connection_info to match the SDK API.

main.go

package main

import (

"github.com/pulumi/pulumi-aws/sdk/v6/go/aws/s3"

"github.com/pulumi/pulumi/sdk/v3/go/pulumi"

"github.com/pulumi/pulumi/sdk/v3/go/pulumi/config"

)

func main() {

pulumi.Run(func(ctx *pulumi.Context) error {

// 1. Load config

cfg := config.New(ctx, "my-challenge")

config := map[string]string{

"identity": cfg.Get("identity"),

}

// 2. Create resources

_, err := s3.NewBucketV2(ctx, "example", &s3.BucketV2Args{

Bucket: pulumi.String(config["identity"]),

Tags: pulumi.StringMap{

"Name": pulumi.String("My Challenge Bucket"),

"Identity": pulumi.String(config["identity"]),

},

})

if err != nil {

return err

}

// 3. Export outputs

// This is a mockup connection info, please provide something meaningfull and executable

ctx.Export("connection_info", pulumi.String("..."))

return nil

})

}

Don’t forget to run go mod tidy to add the required Go modules. Additionally, make sure to configure the chall-manager pods to get access to your AWS configuration through environment variables, and add a Provider configuration in your code if necessary.

Tips & Tricks

You can compile your code to make the challenge creation/update faster, but chall-manager will automatically do it anyway to enhance performances (avoid re-downloading Go modules and Pulumi providers, and compile the scenario).

Such build could be performed through CGO_ENABLED=0 go build -o main path/to/main.go.

Add the following configuration in your Pulumi.yaml file to consume it, and set the binary path accordingly to the filesystem.

runtime:

name: go

options:

binary: ./main

You can test it using the Pulumi CLI with for instance the following.

pulumi stack init # answer the questions

pulumi up # preview and deploy

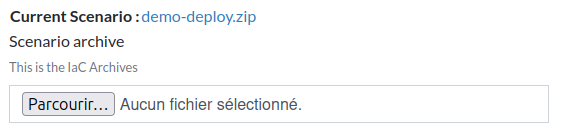

Make it ready for chall-manager

Now that your scenario is designed and coded accordingly to your artistic direction, you have to prepare it for the chall-manager to receive it. Make sure to remove all unnecessary files, and zip the directory it is contained within.

cd ..

zip -r my-challenge.zip ./my-challenge/*

And you’re done. Yes, it was that easy :)

But it could be even more using the SDK !

1.2.2 - Software Development Kit

When you (a ChallMaker) want to deploy a single container specific for each source, you don’t want to understand how to deploy it to a specific provider. In fact, your technical expertise does not imply you are a Cloud expert… And it was not to expect ! Writing a 500-lines long scenario fitting the API only to deploy a container is a tedious job you don’t want to do more than once: create a deployment, the service, possibly the ingress, have a configuration and secrets to handle…

For this reason, we built a Software Development Kit to ease your use of chall-manager. It contains all the features of the chall-manager without passing you the issues of API compliance.

Additionnaly, we prepared some common use-cases factory to help you focus on your CTF, not the infrastructure:

The community is free to create new pre-made recipes, and we welcome contributions to add new official ones. Please open an issue as a Request For Comments, and a Pull Request if possible to propose an implementation.

Build scenarios

Fitting the chall-manager scenario API imply inputs and outputs.

Despite it not being complex, it still requires work, and functionalities or evolutions does not guarantee you easy maintenance: offline compatibility with OCI registry, pre-configured providers, etc.

Indeed, if you are dealing with a chall-manager deployed in a Kubernetes cluster, the ...pulumi.ResourceOption contains a pre-configured provider such that every Kubernetes resources the scenario will create, they will be deployed in the proper namespace.

Inputs

Those are fetchable from the Pulumi configuration.

| Name | Required | Description |

|---|---|---|

identity |

✅ | the identity of the Challenge on Demand request |

Outputs

Those should be exported from the Pulumi context.

| Name | Required | Description |

|---|---|---|

connection_info |

✅ | the connection information, as a string (e.g. curl http://a4...d6.my-ctf.lan) |

flag |

❌ | the identity-specific flag the CTF platform should only validate for the given source |

Kubernetes ExposedMonopod

When you want to deploy a challenge composed of a single container, on a Kubernetes cluster, you want it to be fast and easy.

Then, the Kubernetes ExposedMonopod fits your needs ! You can easily configure the container you are looking for and deploy it to production in the next seconds.

The following shows you how easy it is to write a scenario that creates a Deployment with a single replica of a container, exposes a port through a service, then build the ingress specific to the identity and finally provide the connection information as a curl command.

main.go

package main

import (

"github.com/ctfer-io/chall-manager/sdk"

"github.com/ctfer-io/chall-manager/sdk/kubernetes"

"github.com/pulumi/pulumi/sdk/v3/go/pulumi"

)

func main() {

sdk.Run(func(req *sdk.Request, resp *sdk.Response, opts ...pulumi.ResourceOption) error {

cm, err := kubernetes.NewExposedMonopod(req.Ctx, &kubernetes.ExposedMonopodArgs{

Image: "myprofile/my-challenge:latest",

Port: 8080,

ExposeType: kubernetes.ExposeIngress,

Hostname: "brefctf.ctfer.io",

Identity: req.Config.Identity,

}, opts...)

if err != nil {

return err

}

resp.ConnectionInfo = pulumi.Sprintf("curl -v https://%s", cm.URL)

return nil

})

}

Requirements

To use ingresses, make sure your Kubernetes cluster can deal with them: have an ingress controller (e.g. Traefik), and DNS resolution points to the Kubernetes cluster.

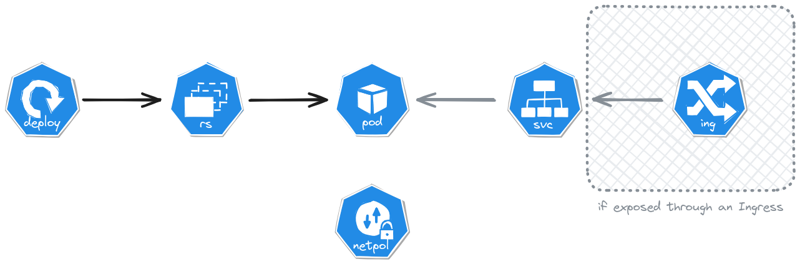

The Kubernetes ExposedMonopod architecture for deployed resources.

1.2.3 - Update in production

So you have a challenge that made its way to production, but it contains a bug or an unexpected solve ? Yes, we understand your pain: you would like to patch this but expect services interruption… It is not a problem anymore !

A common worklow of a challenge fix happening in production.

We adopted the reflexions of The Update Framework to provide infrastructure update mecanisms with different properties.

What to do

You will have to update the scenario, of course. Once it is fixed and validated, archive the new version.

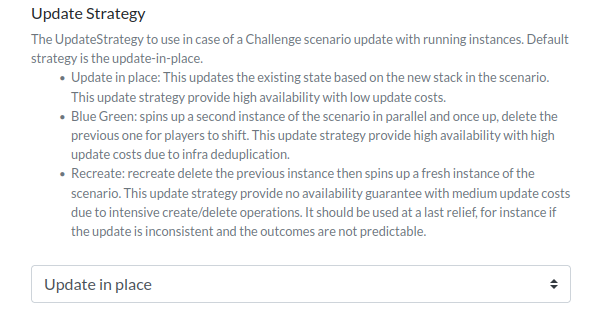

Then, you’ll have to pick up an Update Strategy.

| Update Strategy | Require Robustness¹ | Time efficiency | Cost efficiency | Availability | TL;DR; |

|---|---|---|---|---|---|

| Update in place | ✅ | ✅ | ✅ | ✅ | Efficient in time & cost ; require high maturity |

| Blue-Green | ❌ | ✅ | ❌ | ✅ | Efficient in time ; costfull |

| Recreate | ❌ | ❌ | ✅ | ❌ | Efficient in cost ; time consuming |

¹ Robustness of both the provider and resources updates.

More information on the selection of those models and how they work internally is available in the design documentation.

You’ll only have to update the challenge, specifying the Update Strategy of your choice. Chall-Manager will temporarily block operations on this challenge, and update all existing instances. This makes the process predictible and reproductible, thus you can test in a pre-production environment before production. It also avoids human errors during fix, and lower the burden at scale.

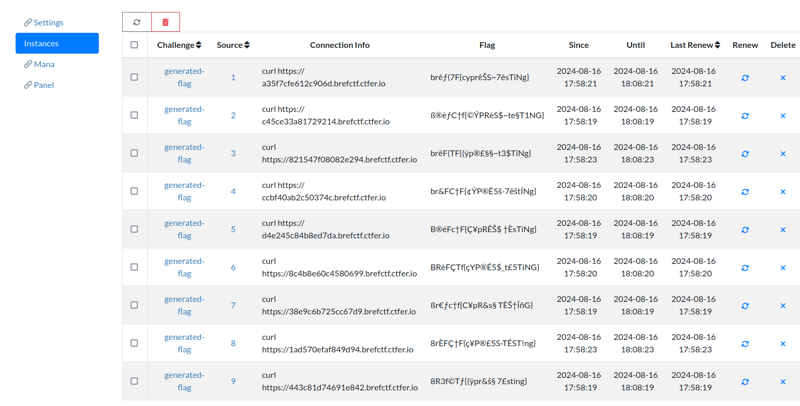

1.2.4 - Use the flag variation engine

Shareflag is considered by some as the worst part of competitions leading to unfair events, while some others consider this a strategy. We consider this a problem we could solve.

Context

In “standard” CTFs as we could most see them, it is impossible to solve this problem: if everyone has the same binary to reverse-engineer, how can you differentiate the flag per each team thus avoid shareflag ?

For this, you have to variate the flag for each source. One simple solution is to use the SDK.

Use the SDK

The SDK can variate a given input with human-readable equivalent characters in the ASCII-extended charset, making it handleable for CTF platforms (at least we expect it). If one character is out of those ASCII-character, it will be untouched.

To import this part of the SDK, execute the following.

go get github.com/ctfer-io/chall-manager/sdk

Then, in your scenario, you can create a constant that contains the “base flag” (i.e. the unvariated flag).

const flag = "my-supper-flag"

Finally, you can export the variated flag.

package main

import (

"github.com/pulumi/pulumi/sdk/v3/go/pulumi"

"github.com/ctfer-io/chall-manager/sdk"

)

const flag = "my-supper-flag"

func main() {

sdk.Run(func(req *sdk.Request, resp *sdk.Response, opts ...pulumi.ResourceOption) error {

// ...

resp.ConnectionInfo = pulumi.String("...").ToStringOutput()

resp.Flag = pulumi.Sprintf("BREFCTF{%s}", sdk.VariateFlag(req.Config.Identity, flag))

return nil

})

}package main

import (

"github.com/pulumi/pulumi/sdk/v3/go/pulumi"

"github.com/pulumi/pulumi/sdk/v3/go/pulumi/config"

"github.com/ctfer-io/chall-manager/sdk"

)

const flag = "my-supper-flag"

func main() {

pulumi.Run(func(ctx *pulumi.Context) error {

// 1. Load config

cfg := config.New(ctx, "no-sdk")

config := map[string]string{

"identity": cfg.Get("identity"),

}

// 2. Create resources

// ...

// 3. Export outputs

ctx.Export("connection_info", pulumi.String("..."))

ctx.Export("flag", pulumi.Sprintf("BREFCTF{%s}", sdk.VariateFlag(config["identity"], flag)))

return nil

})

}If you want to use decorator around the flag (e.g. BREFCTF{}), don’t put it in the flag constant else it will be variated.

1.3 - Developer Guides

1.3.1 - Entensions

How can we extend chall-manager capabilities ? You cannot, in itself: there is no support for live-mutability of functionalities, plugins, nor there will be (immutability is both an operation and security principle, determinism is a requirement).

But as chall-manager is designed as a Micro Service, you only have to reuse it !

Hacking chall-manager API

Taking a few steps back, you can abstract the chall-manager API to fit your needs:

- the

connection_infois an Output data from the instance to the player. - the

flagis an optional Output data from the instance to the backend. Then, if you want to pass additional data, you can use those commmunications buses.

Case studies

Follows some extension case studies.

MultiStep & MultiFlags

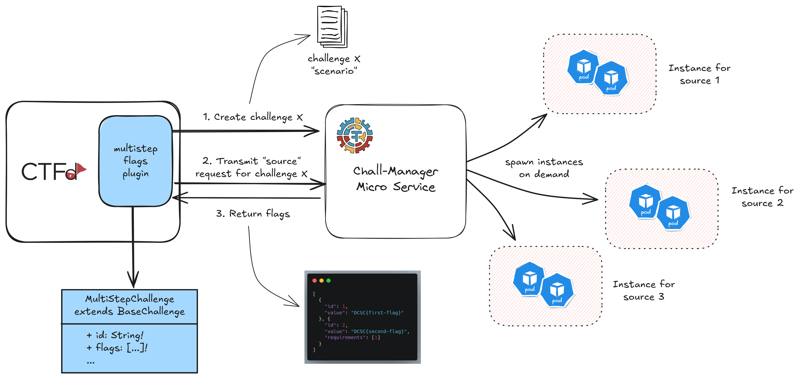

The original idea comes from the JointCyberRange, the following architecture is our proposal to solve the problem. In the “MultiStep challenge” problem they would like to have Jeopardy-style challenges constructed as a chain of steps, where each step has its own flag. To completly flag the challenge, the player have to get all flags in the proper order. Their target environment is an integration in CTFd as a plugin, and challenges deployed to Kubernetes.

Deploying those instances, isolating them, janitoring if necessary are requirements, thus would have been reimplemented. But chall-manager can deploy scenarios to whatever environment. Our proposal is then to cut the problem in two parts according to the Separation of Concerns Principle:

- a CTFd plugin that implement a new challenge type, and communicate with chall-manager

- chall-manager to deploy the instances

The connection_info can be unchanged from its native usage in chall-manager.

The flag output could contain a JSON object describing the flags chain and internal requirements.

Our suggested architecture for the JCR MultiStep challenge plugin for CTFd.

Through this architecture, JCR would be able to fit their needs shortly by capitalizing on the chall-manager capabilities, thus extend its goals. Moreover, it would enable them to provide the community a CTFd plugin that does not only fit Kubernetes, thanks to the genericity.

1.3.2 - Integrate with a CTF platform

So you want to integrate chall-manager with a CTF platform ? Good job, you are contributing to the CTF ecosystem !

Here are the known integrations in CTF platforms:

The design

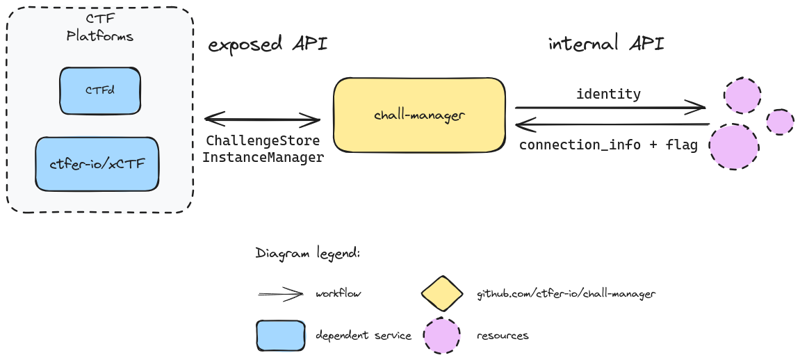

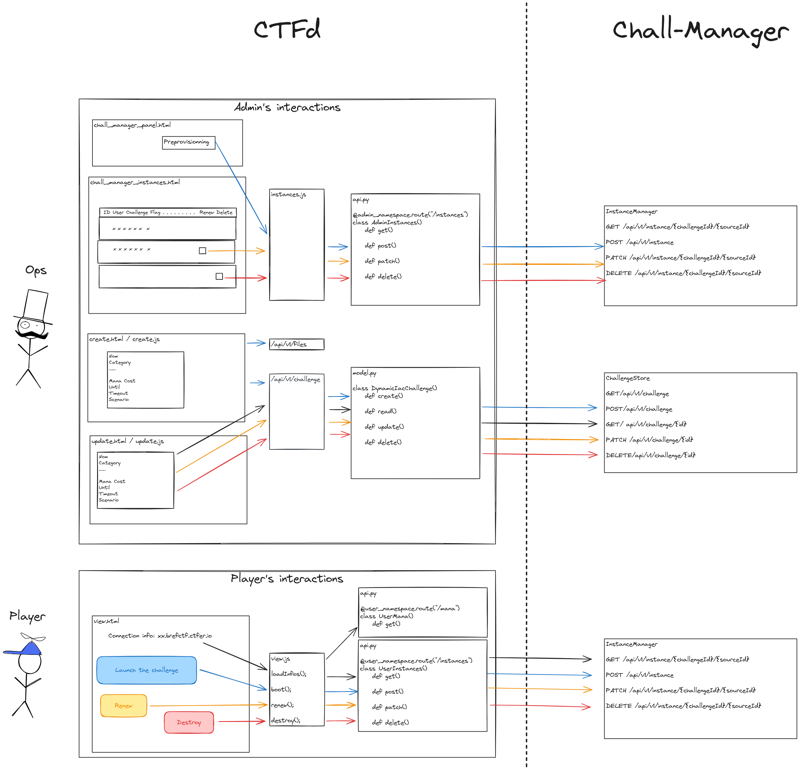

The API is split in two services:

- the

ChallengeStoreto handle the CRUD operations on challenges (ids, scenarios, etc.). - the

InstanceManagerto handle players CRUD operations on instances.

You’ll have to handle both services as part of the chall-manager API if you want proper integration.

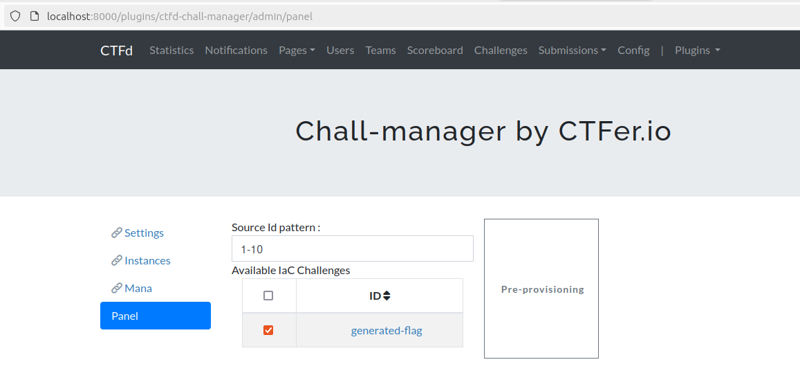

We encourage you to add additional efforts around this integration, with for instance:

- management views to monitor which challenge is started for every players.

- pre-provisionning to better handle load spikes at the beginning of the event.

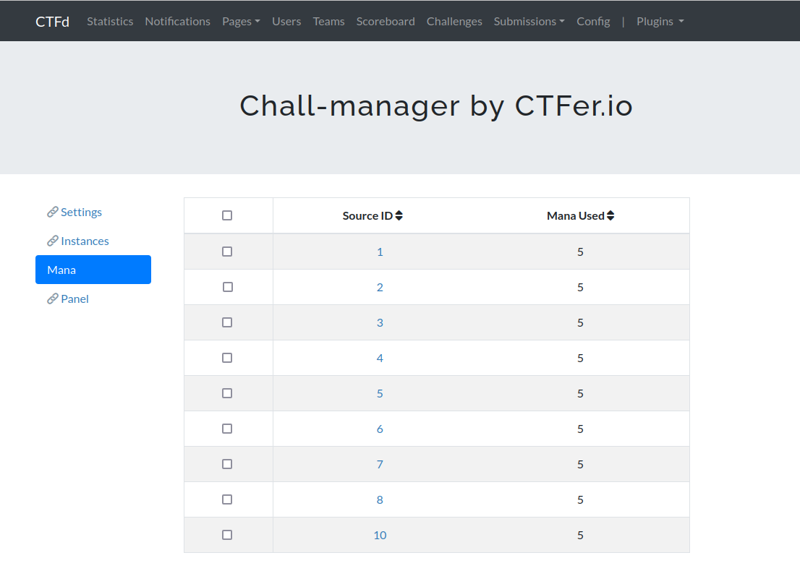

- add rate limiting through a mana.

- the support of OpenTelemetry for distributed tracing.

Use the proto

The chall-manager was conceived using a Model-Based Systems Engineering practice, so API models (the contracts) were written, and then the code was generated.

This makes the .proto files the first-class citizens you may want to use in order to integrate chall-manager to a CTF platform.

Those could be found in the subdirectories here. Refer the your proto-to-code tool for generating a client from those.

If you are using Golang, you can directly use the generated clients for the ChallengeStore and InstanceManager services API.

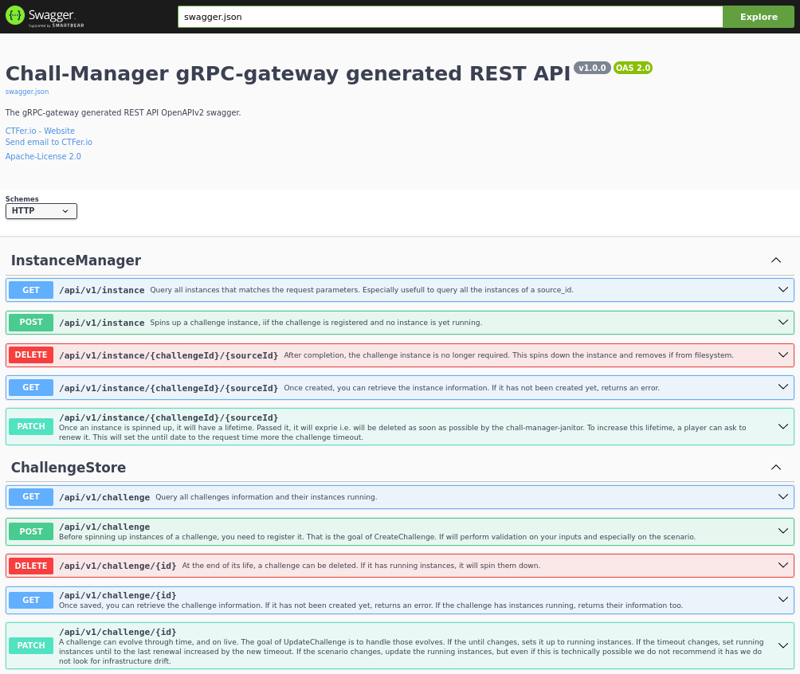

If you cannot or don’t want to use the proto files, you can use the gateway API.

Use the gateway

Because some languages don’t support gRPC, or you don’t want to, you can simply communicate with chall-manager through its JSON REST API.

To access this gateway, you have to start your chall-manager with the proper configuration:

- either

--gwas an arg orGATEWAY=trueas a varenv - either

--gw-swaggeras an arg orGATEWAY_SWAGGER=trueas a varenv

You can then reach the Swagger at http://my-chall-manager:9090/swagger/#, which should show you the following.

The chall-manager REST JSON API Swagger.

Use this Swagger to understand the API, and build your language-specific client in order to integrate chall-manager. We do not provide official language-specific REST JSON API clients.

1.4 - Design

In this documentation chapter, we explain what led us to design the Chall-Manager as such. We go from the CTF limitations, the Challenge on Demand problem, the functionalities, the genericity of our approach, how we made sure of its usability, to the innovative testing strategy.

1.4.1 - Context

A Capture The Flag (CTF) is an event that brings a set of players together, to challenge themselves and others on domain-specific problems. Those events could have for objective learning, or made for competing with cashprizes. They could be hold physically, virtually or hybrid.

CTFs are largely adopted in the cybersecurity community, taking place all over the world on a daily basis. In this community, plenty domains are played: web, forensic, Active Directory, cryptography, game hacking, telecommunications, steganography, mobile, OSINT, reverse engineering, programming, etc.

In general, a challenge is composed of a name, has a description, a set of hints, files and other data, shared for all. On top of those, the competition runs over points displayed on scoreboards: this is how people keep getting entertained throughout a continuous and hours-long rush. Most of the challenges find sufficient solutions to their needs with those functionalities, perhaps some does not…

If we consider other aspects of cybersecurity -as infrastructures, telecommunications, satellites, web3 and others- those solutions are not sufficient. They need specific deployments strategies, and are costfull to deploy even only once.

Nevertheless, with the emergence of the Infrastructure as Code paradigm, we think of infrastructures as reusable components, composing with pieces like for a puzzle. Technologies appeared to embrace this new paradigm, and where used by the cybersecurity community to build CTF infrastructures. Players could then share infrastructures to play those categories.

In reality, yes they could. But, de facto, they share the same infrastructure. If you are using a CTF to select the top players for a larger event, how would you be able to determine who performed better ? How do you assure their success is due to their sole effort and not a side effect of someone else work ? In the opposite direction, if you are a player who is loosing its mind on a challenge, you won’t be glad that someone broke the entire challenge thus your efforts are wortheless, isn’t it ?

What’s next ?

Read The Need to clarify the necessity of Challenge on Demand.

1.4.2 - The Need

“Sharing is caring” they said… they were wrong. Sometimes, isolating things can get spicy, but it implies replicating infrastructures. If someone soft-locks its challenge, it would be its entire problem: it won’t affect other players experience. Furthermore, you could then imagine a network challenge where your goal is to chain a vulnerability to a Man-in-the-Middle attack to get access to a router and spy on communications to steal a flag ! Or a small company infrastructure that you would have to break into and get an administrator account ! And what if the infrastructure went down ? Then it would be isolated, other players could still play in their own environment. This idea can be really productive, enhance the possibilities of a CTF and enable realistic -if not realism- attacks to take place in a controlled manner.

This is the Challenge on Demand problem: giving every player or team its own instance of a challenge, isolated, to play complex scenarios. With a solution to this problem, players and teams could use a brand new set of knowledges they could not until this: pivoting from an AWS infrastructure to an on-premise company IT system, break into a virtual bank vault to squeeze out all the belongings, hack their own Industrial Control System or IoT assets… A solution to this problem would open a myriad possibilites.

The existing solutions

As it is a widespread known limitation in the community, people tried to solve the problem. They conceived solutions that would fit their need, considering a set of functionalities and requirements, then built, released and deployed them successfully.

Some of them are famous:

- CTFd whale is a CTFd plugin able to spin up Docker containers on demand.

- CTFd owl is an alternative to CTFd whale, less famous.

- KubeCTF is another CTFd plugin made to spin up Kubernetes environments.

- Klodd is a rCTF service also made to spin up Kubernetes environments.

Nevertheless, they partially solved the root problem: those solutions solved the problem in a context (Docker, Kubernetes), with a Domain Specific Language (DSL) that does not guarantee non-vendor-lock-in nor ease use and testing, and lack functionalities such as Hot Update.

An ideal solution to this problem require:

- the use of a programmatic language, not a DSL (non-vendor-lock-in and functionalities)

- the capacity to deploy an instance without the solution itself (non-vendor-lock-in)

- the capacity to use largely-adopted technologies (e.g. Terraform, Ansible, etc., for functionalities)

- the genericity of its approach to avoid re-implementing the solution for every service provider (functionalities)

There were no existing solutions that fits those requirements… Until now.

Grey litterature survey

Follows an exhaustive grey litterature survey on the solutions made to solve the Challenge on Demand problem. To enhance this one, please open an issue or a pull request, we would be glad to improve it !

| Service | CTF platform | Genericity | Technical approach | Scalable |

|---|---|---|---|---|

| CTFd whale | CTFd | ❌ | Docker socket | ❌¹ |

| CTFd owl | CTFd | ❌ | Docker socket | ❌¹ |

| KubeCTF | Agnostic, CTFd plugin | ❌ | Use Kubernetes API and annotations | ✅ |

| Klodd | rCTF | ❌ | Use Kubernetes CRDs and a microservice | ✅ |

| HeroCTF's deploy-dynamic | Agnostic | ❌ | API wrapper around Docker socket | ❌¹ |

| 4T$'s I | CTFd | ❌ | Use Kubernetes CRDs and a microservice | ✅ |

¹ not considered scalable as it reuses a Docker socket thus require a whole VM. As such a VM is not scaled automatically (despite being technically feasible), the property aligns with the limitation.

Classification for Scalable:

- ✅ partially or completfully scalable. Classification does not goes further on criteria as the time to scale, autoscaling, load balancing, anticipated scaling, descaling, etc.

- ❌ only one instance is possible.

Litterature

More than a technical problem, the chall-manager also provides a solution to a scientific problem. In previous approaches for cybersecurity competitions, many referred to an hypothetic generic approach to the Challenge on Demand problem. None of them introduced a solution or even a realistic approach, until ours.

In those approaches to Challenge on Demand, we find:

- “PAIDEUSIS: A Remote Hybrid Cyber Range for Hardware, Network, and IoT Security Training”, Bera et al. (2021) provide Challenge on Demand for Hardware systems (Industrial Control Systems, FPGA).

- “Design of Remote Service Infrastructures for Hardware-based Capture-the-Flag Challenges”, Marongiu and Perra (2021) related to PAIDEUSIS, with the foundations for hardware-based Challenge on Demand.

- “Lowering the Barriers to Capture the Flag administration and Participation”, Kevin Chung (2017) in which it is a limitation of CTFd, where picoCTF has an “autogen” challenge feature.

- “Automatic Problem Generation for Capture-the-Flag Competitions”, Burket et al. (2015) discusses the problem of generating challenges on demand, regarding fairness evaluation for players.

- “Scalable Learning Environments for Teaching Cybersecurity Hands-on”, Vykopal et al. (2021) discusses the problem of creating cybersecurity environments, similarly to Challenge on Demand, and provide guidelines for production environments at scale.

- “GENICS: A framework for Generating Attack Scenarios for Cybersecurity Exercices on Industrial Control Systems”, Song et al. (2024) formulates a process to generate attack scenario applicable in a CTF context, technically possible through approaches like PAIDEUSIS.

Conclusions

Even if there are some solutions developed to help the community deal with the Challenge on Demand problem, we can see many limitations: only Docker and Kubernetes are covered, and none is able to provide the required genericity to consider other providers, even custom ones.

A production-ready solution would enable the community to explore new kinds of challenges, both technical and scientific.

This is why we created chall-manager: provide a free, open-source, generic, non-vendor-lock-in and ready-for-production solution. Feel free to build on top of it. Change the game.

What’s next ?

How we tackled down the complexity of building this system, starting from the architecture.

1.4.3 - Genericity

While trying to find a generic approach to deploy any infrastructure with a non-vendor-lock-in API, we looked at existing approaches. None of them proposed such an API, so we had to pave the way to a new future. But we did not know how to do it.

One day, after deploying infrastructures with Victor, we realised it was the solution. Indeed, Victor is able to deploy any Pulumi stack. This imply that the solution was already before our eyes: a Pulumi stack.

This consist the genericity layer, as easy as this.

Pulumi as the solution

To go from theory to practice, we had to make choices. One of the problem with a large genericity is it being… large, actually.

If you consider all ecosystems covered by Pulumi, to cover them you’ll require all runtimes installed on the host machine. For instance, the Pulumi Docker image is around 1.5 GB. This imply that a generic solution covering all ecosystems would be around 2 GB of memory.

Moreover, the enhancements you can propose in a language would have to be re-implemented similarly in every language, or transpiled. As transpilation is a heavy task, either manual or automatic but with a high error rate, it is not suitable for production.

Our choice was to focus on one language first (Golang), and later permit transpilation in other languages if technically automatable with a high success rate. With this choice, we would only have to deal with the Pulumi Go Docker image, around 200 MB (a 7.5 reduction factor). It could be even more reduced using minified images, using the Slim Toolkit or Chainguard Apko.

From the idea to an actual tool

With those ideas in mind, we had to transition from TRLs by implementing it in a tool. This tool could provide a service, thus the architecture was though as a Micro Service.

Doing so enable other Micro Services or CTF platforms to be developed and reuse the capabilities of chall-manager. We can then imagine plenty other challenges kind that would require Challenge on Demand:

- King of the Hill

- Attack & Defense (1 vs 1, 1 vs n, 1 vs bot)

- MultiSteps & MultiFlags (for Jeopardy)

We already plan creating 1 and 2.

What’s next

- Understand the Architecture of the microservice.

1.4.4 - Architecture

In the process of architecting a microservice, a first thing is to design the API exposed to the other microservices. Once this done, you implement it, write the underlying software that provides the service, and think of its deployment architecture to fulfill the goals.

API

We decided to avoid API conception issues by using a Model Based Systems Engineering method, to focus on the service to provide. This imply less maintenance, an improved quality and stability.

This API revolve around a simplistic class diagram as follows.

classDiagram

class Challenge {

+ id: String!

+ hash: String!

+ timeout: Duration

+ until: DateTime

}

class Instance {

+ id: String!

+ since: DateTime!

+ last_renew: DateTime!

+ until: DateTime

+ connection_info: String!

+ flag: String

}

Challenge "*" *--> "1" Instance: instances

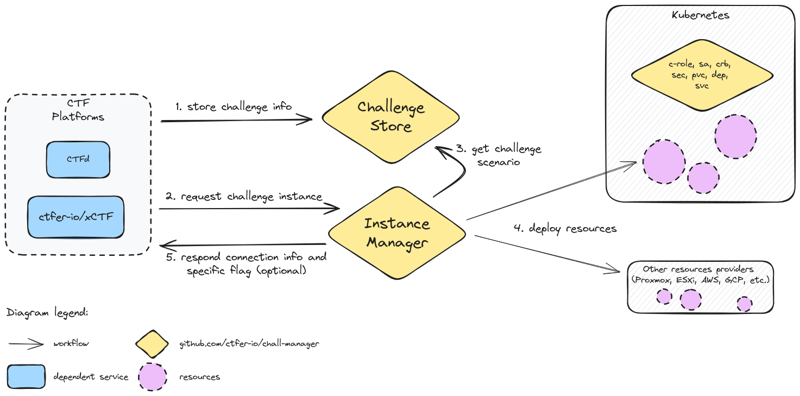

Given those two classes, we applied the Separation of Concerns Principle and split it in two cooperating services:

- the

ChallengeStoreto handle the challenges configurations - the

InstanceManagerto be the carrier of challenge instances on demand

Then, we described their objects and methods in protobuf, and using buf we generated the Golang code for those services. The gRPC API would then be usable by any service that would want to make use of the service.

Additionally, for documentation and ease of integration, we wanted a REST JSON API: through a gateway and a swagger, that was performed still in a code-generation approach.

Software

Based on the generated code, we had to implement the functionalities to fulfill our goals.

Functionalities and interactions of the chall-manager services, from a software point of view.

Nothing very special here, it was basically features implementation. This was made possible thanks to the Pulumi API that can be manipulated directly as a Go module with the auto package.

Notice we decided to avoid depending on a file-database such as an S3-compatible (AWS S3 or MinIO) as those services may not be compatible either to offline contexts or business contexts (due to the MinIO license being GNU AGPL-v3.0).

Nevertheless, we required a solution for distributed locking systems that would not fit into chall-manager. For this, we choosed etcd. File storage replication would be handled by another solution like Longhorn or Ceph. This is further detailed in High-Availability.

In our design, we deploy an etcd instance rather than using the Kubernetes already existing one. By doing so, we avoid deep integration of our proposal into the cluster which enables multiple instances to run in parallel inside an already existing cluster. Additionnaly, it avoids innapropriate service intimacy and shared persistence issues described as good development practices in Micro Services architectures by Taibi et al. (2020) and Bogard (2017).

Deployment

Then, multiple deployment targets could be discussed. As we always think of online, offline and on-premise contexts, we wanted to be able to deploy on a non-vendor-specific hypervisor everyone would be free to use. We choosed Kubernetes.

To lift the requirements and goals of the software, we had to deploy an etcd cluster, a deployment for chall-manager and a cronjob for the janitor.

The Kubernetes deployment of a chall-manager.

One opportunity of this approach is, as chall-manager is as close as possible to the Kubernetes provider (intra-cluster), it will be easily feasible to manage resources in it. Using this, ChallMaker and Ops could deploy instances on Demand with a minimal effort. This is the reason why we recommend an alternative deployment in the Ops guides, to be able to deploy challenges in the same Kubernetes cluster.

Exposed vs. Scenario

Finally, another aspect of deployment is the exposed and scenario APIs.

The exposed one has been previously described, while the scenario one applies on scenarios once executed by the InstanceManager.

The exposed and scenario APIs.

This API will be described in the Software Development Kit.

What’s next ?

In the next section, you will understand how data consistency is performed while maintaining High Availability.

1.4.5 - High Availability

When designing an highly available application, the Availability and Consistency are often deffered to the database layer. This database explains its tradeoff(s) according to the CAP theorem. Nevertheless, chall-manager does not use a database for simplicity of use.

First of all, some definitions:

- Availability is the characteristic of an application to be reachable to the final user of services.

- High Availability contains Availability, with minimal interruptions of services and response times as low as possible.

To perform High Availability, you can use update strategies such as a Rolling Update with a maximum unavailability rate, replicate instances, etc. But it depends on a property: the application must be scalable (many instances in parallel should not overlap in their workloads if not designed so), have availability and consistency mecanisms integrated.

One question then arise: how do we assure consistency with a maximal availability ?

Fallback

As the chall-manager should scale, the locking mecanism must be distributed. In case of a network failure, it imply that whatever the final decision, the implementation should provide a recovery mecanism of the locks.

Transactions

A first reflex would be to think of transactions. They guarantee data consistency, so would be good candidates. Nevertheless, as we are not using a database that implements it, we would have to design and implement those ourselves. Such an implementation is costfull and should only happen when reusability is a key aspect. As it is not the case here (specific to chall-manager), it was a counter-argument.

Mutex

Another reflex would be to think of (distributed) mutexes. Through a mutual exclusion, we would be sure to guarantee data consistency, but nearly no availability. Indeed, a single mutex would lock out all incoming requests until the operation is completly performed. Even if it would be easy to implement, it does not match our requirements.

Such implementation would look like the following.

sequenceDiagram

Upstream ->> API: Request

API ->>+ Challenge: Lock

Challenge ->> Challenge: Perform CRUD operation

Challenge ->>- API: Unlock

API ->> Upstream: Response

Multiple mutex

We need something finer than (distributed) mutex: if a challenge A is under a CRUD operation, we don’t need challenge B to not be able to handle another CRUD operation !

We can imagine one (distributed) mutex per challenge such that they won’t stuck one another. Ok, that’s fine… But what about instances ?

The same problem arise, the same solution: we can construct a chain of mutexes such that to perform a CRUD operation on an Instance, we lock the Challenge first, then the Instance, unlock the Challenge, execute the operation, and unlock the Instance. An API call from an upstream source or service is represented with this strategy as follows.

sequenceDiagram

Upstream ->> API: Request

API ->>+ Challenge: Lock

Challenge ->> Instance: Lock

Challenge ->>- API: Unlock

API ->>+ Instance: Request

Instance ->> Instance: Perform CRUD operation

Instance ->>- Challenge: Unlock

Instance ->> API: Response

API ->> Upstream: Response

One last thing, what if we want to query all challenges information (to build a dashboard, janitor outdated instances, …) ?

We would need a “Stop The World"-like mutex from which every challenge mutex would require context-relock before operation. To differenciate this from the Garbage Collector ideas, we call this a “Top-of-the-World” aka TOTW.

The previous would now become the following.

sequenceDiagram

Upstream ->> API: Request

API ->>+ TOTW: Lock

TOTW ->>+ Challenge: Lock

TOTW ->>- API: Unlock

Challenge ->> Instance: Lock

Challenge ->>- TOTW: Unlock

API ->>+ Instance: Request

Instance ->> Instance: Perform CRUD operation

Instance ->>- Challenge: Unlock

Instance ->> API: Response

API ->> Upstream: Response

That would guarantee consistency of the data while having availability on the API resources. Nevertheless, this availability is not high availability: we could enhance further.

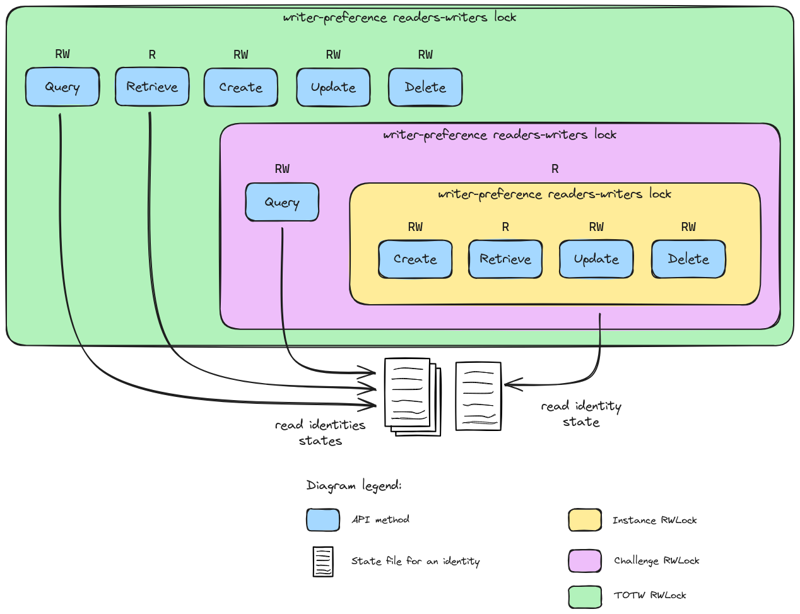

Writer-Preference Reader-Writer Distributed Lock

All CRUD operations are not equal, and can be split in two groups:

- reading (Query, Read)

- writing (Create, Update, Delete).

The reading operations does not affect the state of an object, while writing ones does. Moreover, in the case of chall-manager, reading operations are nearly instantaneous and writing ones are at least 10-seconds long. How to deal with those unbalanced operations ?

As soon as discussions on OS began, researchers worked on the similar question and found solutions. They called this one the “reader-writer problem”.

In our specific case, we want writer-preference as they would largely affect the state of the resources. Using the Courtois et al. (1971) second problem solution for writer-preference reader-writer solution, we would need 5 locks and 2 counters.

For the technical implementation, we have multiple solutions: etcd, redis or valkey. We decided to choose etcd because it was already used by Kubernetes, and the etcd client v3 already implement mutex and counters.

The triple chain of writer-preference reader-writer distributed locks.

With this approach, we could ensure data consistency throughout all replicas of chall-manager, and high-availability.

CRDT

Can a Conflict-Free Replicated data Type have been a solution ?

In our case, we are not looking for eventual consistency, but strict consistency. Moreover, using CRDT is costfull in development, integration and operation, so if avoidable they should be. CRDT are not the best tool to use here.

What’s next ?

Based on the guarantee of consistency and high availability, inform you on the other major problem: Hot Update.

1.4.6 - Hot Update

When a challenge is affected by a bug leading to the impossibility of solving it, or if an unexpected solve is found, you will most probably want it fixed for fairness.

If this happens on challenge with low requirements, you will fix the description, files, hints, etc. But what about challenges that require infrastructures ? You may fix the scenario, but it won’t fix the already-existing instances. If no instance has been deployed, it is fine: fixing the scenario is sufficient. Once instances has been deployed, we require a mecanism to perform this update automatically.

A reflex when dealing with a question of updates and deliveries is to refer to The Update Framework. With the embodiement of its principles, we wanted to provide macro models for hot update mecanisms. To do this, we listed a bunch of deployment strategies, analysed their inner concepts and group them on this.

| Precise model | Applicable | Macro model |

|---|---|---|

| Blue-Green deployment | ✅ | Blue-Green |

| Canary deployment | ✅ | |

| Rolling deployment | ✅ | |

| A/B testing | ✅ | |

| Shadow deployment | ✅ | |

| Red-Black deployment | ✅ | |

| Highlander deployment | ✅ | Recreate |

| Recreate deployment | ✅ | |

| Update-in-place deployment | ✅ | Update-in-place |

| Immutable infrastructure | ❌ | |

| Feature toggles | ❌ | |

| Dark launches | ❌ | |

| Ramped deployment | ❌ | |

| Serverless deployment | ❌ | |

| Multi-cloud deployment | ❌ |

Strategies were classified not applicable when they did not include update mecanisms.

With the 3 macro models, we define 3 hot update strategies.

Blue-Green

The blue-green update strategy starts a new instance with the new scenario, and once it is done shuts down the old one.

It requires both instances in parallel, thus is a resources-consuming update strategy. Nevertheless, it reduces services interruptions to low or none. To the extreme, the infrastructure should be able to handle two times the instances load.

sequenceDiagram

Upstream ->>+ API: Request

API ->>+ New Instance: Start instance

New Instance ->>- API: Instance up & running

API ->>+ Old Instance: Stop instance

Old Instance ->>- API: Done

API ->>- Upstream: Respond new instance info

Recreate

The recreate update strategy shuts down the old one, then starts a new instance with the new scenario.

It is a resources-saving update strategy, but imply services interruptions enough time to stop the old instance and starts a new one. To the extreme, it does not require additional resources more than one time the instance load.

sequenceDiagram

Upstream ->>+ API: Request

API ->>+ Old Instance: Stop instance

Old Instance ->>- API: Done

API ->>+ New Instance: Start instance

New Instance ->>- API: Instance up & running

API ->>- Upstream: Respond refreshed instance info

Update-in-place

The update-in-place update strategy loads the new scenario and update resources in live.

It is a resource-saving update strategy, that imply low to none services interruptions, but require robustness in the update mecanisms. If the update mecanisms are not robust, we do not recommend this one as it could soft-lock resources in the providers. To the extreme, it does not require additional resources more than one time the instance load.

sequenceDiagram

Upstream ->>+ API: Request

API ->>+ Instance: Update instance

Instance ->>- API: Refreshed instance

API ->>- Upstream: Respond refreshed instance info

Overall

| Update Strategy | Require Robustness¹ | Time efficiency | Cost efficiency | Availability | TL;DR; |

|---|---|---|---|---|---|

| Update in place | ✅ | ✅ | ✅ | ✅ | Efficient in time & cost ; require high maturity |

| Blue-Green | ❌ | ✅ | ❌ | ✅ | Efficient in time ; costfull |

| Recreate | ❌ | ❌ | ✅ | ❌ | Efficient in cost ; time consuming |

¹ Robustness of both the provider and resources updates.

What’s next ?

How did we incorporate security in such a powerfull service ? Find answers in Security.

1.4.7 - Security

The problem with the genericity of chall-manager resides in its capacity to execute any Golang code as long as it fits in a Pulumi stack i.e. anything. For this reason, there are multiple concerns to address when using the chall-manager.

Nevertheless, it also provides actionable responses to security concerns, such as shareflag and bias.

Authentication & Authorization

A question regarding such a security concern of an “RCE-as-a-Service” system is to throw authentication and authorization at it. Technically, it could fit and get justified.

Nevertheless, we think that, first of all, chall-manager replicas should not be exposed to end users and untrusted services thus Ops should put mTLS in place between trusted services and restraint communications to the bare minimum, and secondly, the Separation of Concerns Principle imply authentication and authorization are another goal thus should be achieved by another service.

Finally, authentication and authorization may but justifiable if Chall-Manager was operated as a Service. As this would not be the case with a Community Edition, we consider it out of scope.

Kubernetes

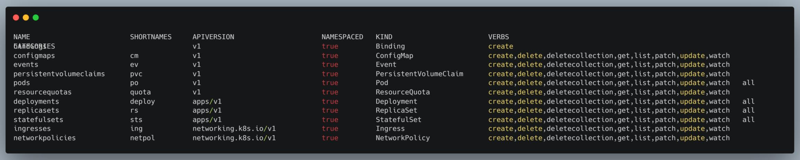

If deployed as part of a Kubernetes cluster, with a ServiceAccount and a specific namespace to deploy instances, the chall-manager is able to mutate the architecture on the fly. To minimize the effect of such mutations, we recommend you provide this ServiceAccount a Role with a limited set of verbs on api groups. Those resources should only be namespaced.

To build this Role for your needs, you can use the command kubectl api-resources –-namespaced=true –o wide to visualize a cluster resources and applicable verbs.

An extract of a the resources of a Kubernetes cluster and their applicable verbs.

More details on Kubernetes RBAC.

Shareflag

One of the actionable response provided by the chall-manager is through an anti-shareflag mecanism.

Each instance deployed by the chall-manager can return in its scenario a specific flag. This flag will then be used by the upstream CTF platform to ensure the source -and only it- found the solution.

Moreover, each instance-specific flag could be derived from an original constant one using the flag variation engine.

ChallOps bias

As each instance is an infrastructure in itself, variations could bias them: lighter network policies, easier brute force, etc. A scenario is not biased by essence.

If we make a risk analysis on the chall-manager capabilities and possibilities for an event, we have to consider a biased ChallMaker or Ops that produces uneven-balanced scenarios.

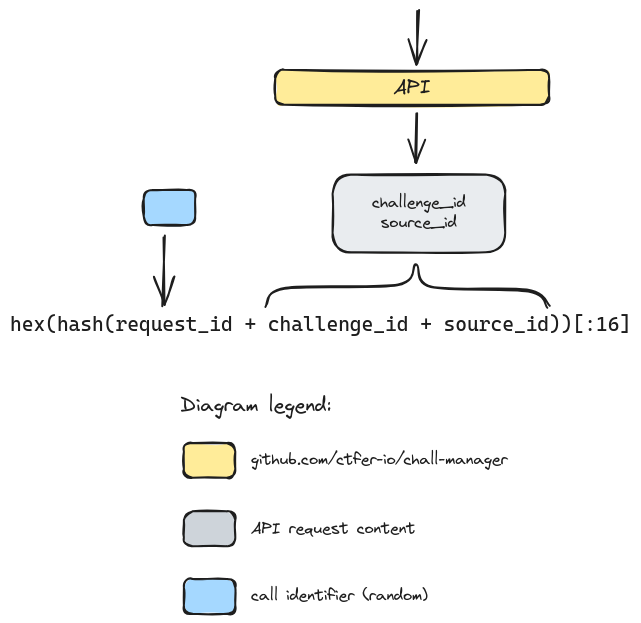

For this reason, the chall-manager API does not expose the source identifier of the request to the scenario code, but an identity. This is declined as follows. It strictly identifies an infrastructure identifier, the challenge the instance was requested from, and the source identifier for this instance.

A visualization of how views are split apart to avoid the ChallOps bias.

Notice the identity is limited to 16 hexadecimals, making it compatible to multiple uses like a DNS name or a PRNG seed. This increases the possibilites of collisions, but can still cover \(16^{16} = 18.446.744.073.709.551.616\) combinations, trusted sufficient for a CTF (\(f(x,y) = x \times y - 16^{16}\), find roots: \(x \times y=16^{16} \Leftrightarrow y=\frac{16^{16}}{x}\) so roots are given by the couple \((x, \frac{16^{16}}{x})\) with \(x\) the number of challenges. With largely enough challenges e.g. 200, there is still place for \(\frac{16^{16}}{200} \simeq 9.2 \times 10^{16}\) instances each).

What’s next ?

Learn how we dealt with the resources expirations.

1.4.8 - Expiration

Context

During the CTF, we don’t want players to be capable of manipulating the infrastructure at their will: starting instances are costful, require computational capabilities, etc. It is mandatory to control this while providing the players the power to manipulate their instances at their own will.

For this reason, one goal of the chall-manager is to provide ephemeral (or not) scenarios. Ephemeral imply lifetimes, expirations and deletions.

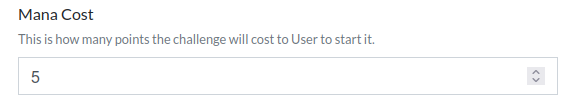

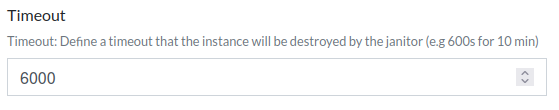

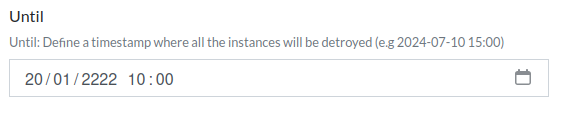

To implement this, for each Challenge the ChallMaker and Ops can set a timeout in seconds after which the Instance will be deleted once up & running, or an until date after which the instance will be deleted whatever the timeout. When an Instance is deployed, its start date is saved, and every update is stored for traceability. A participant (or a dependent service) can then renew an instance on demand for additional time, as long as it is under the until date of the challenge. This is based on a hypothesis that a challenge should be solved after \(n\) minutes.

Note

The timeout should be evaluated based on expert’s point of view regarding the complexity of the conceived challenge, with a consideration of the participant skill sets (an expert can be expected to solve an introduction challenge in seconds, while a beginer can take several minutes).

There is no “rule of the thumb”, but we recommend double-testing the challenge by both a domain-expert for technical difficulty and another ChallMaker unrelated to this domain.

Deleting instances when outdated then becomes a new goal of the system, thus we cannot extend the chall-manager as it would be a rupture of the Separation of Concerns Principle: it is the goal of another service, chall-manager-janitor. This is also justified by the frequency model applied to the janitor, which is unrelated to the chall-manager service itself.

With such approach, other players could use the resources. Nevertheless, it requires a mecanism to wipe out infrastructure resources after a given time.

Some tools exist to do so.

| Tool | Environment |

|---|---|

| hjacobs/kube-janitor | Kubernetes |

| kubernetes-sig/boskos | Kubernetes |

| rancher/aws-janitor | AWS |

Despite tools exist, they are context-specifics thus are limited: each one has its own mecanism and only 1 environment is considered. As of genericity, we want a generic approach able to handle all ecosystems without the need for specific implementations. For instance, if a ChallMaker decides to cover a unique, private and offline ecosystem, how could (s)he do ?

That is why the janitor must have the same level of genericity as chall-manager itself. Despite it is not optimal for specifics providers, we except this genericity to be a better tradeoff than covering a limited set of technologies. This modular approach enable covering new providers (vendor-specifics, public or private) without involving CTFer.io in the loop.

How it works

By using the chall-manager API, the janitor looks up at expiration dates.

Once an instance is expired, it simply deletes it.

Using a cron, the janitor could then monitor the instances frequently.

flowchart LR

subgraph Chall-Manager

CM[Chall-Manager]

Etcd

CM --> Etcd

end

CMJ[Chall-Manager-Janitor]

CMJ -->|gRPC| CM

If two janitors triggers in parallel, the API will maintain consistency. Errors code are to expect, but no data inconsistency.

As it does not plugs into a specific provider mecanism nor requirement, it guarantees platform agnosticity. Whatever the scenario, the chall-manager-janitor will be able to handle it.

Follows the algorithm used to determine the instance until date based on a challenge configuration for both until and timeout.

Renewing an instance re-execute this to ensure consistency with the challenge configuration.

Based on the instance until date, the janitor will determine whether to delete it or not (\(instance.until > now() \Rightarrow delete(instance)\)).

flowchart LR

Start[Compute until]

Start-->until{"until == nil ?"}

until---|true|timeout1{"timeout == nil ?"}

timeout1---|true|out1["nil"]

timeout1---|false|out2["now()+timeout"]

until---|false|timeout2{"timeout == nil ?"}

timeout2---|true|out3{"until"}

timeout2---|false|out4{"min(now()+timeout,until)"}

What’s next ?

Listening to the community, we decided to improve further with a Software Development Kit.

1.4.9 - Software Development Kit

A first comment on chall-manager was that it required ChallMaker and Ops to be DevOps. Indeed, if we expect people to be providers’ experts to deploy a challenge, when there expertise is on a cybersecurity aspect… well, it is incoherent.

To avoid this, we took a few steps back and asked ourselves: for a beginner, what are the deployment practices that could arise form the use of chall-manager ?

A naive approach was to consider the deployment of a single Docker container in a Cloud provider (Kubernetes, GCP, AWS, etc.). For this reason, we implemented the minimal requirements to effectively deploy a Docker container in a Kubernetes cluster, exposed through an Ingress or a NodePort. The results were hundreds-line-long, so confirmed we cannot expect non-professionnals to do it.

Based on this experiment, we decided to reuse this Pulumi scenario to build a Software Development Kit to empower the ChallMaker. The references architectures contained in the SDK are available here. The rule of thumb with them is to infer the most possible things, to have a mimimum configuration for the end user.

Other features are available in the SDK.

Flag variation engine

Commonly, each challenge has its own flag. This suffers a big limitation that we can come up to: as each instance is specific to a source, we can define the flag on the fly. But this flag must not be shared with other players or it will enable shareflag.

For this reason, we provide the ability to mutate a string (expected to be the flag): for each character, if there is a variant in the ASCII-extended charset, select one of them randomly and based on the identity.

Variation rules

The variation rules follows, and if a character is not part of it, it is not mutated (each variant has its mutations evenly distributed):

a,A,4,@,ª,À,Á,Â,Ã,Ä,Å,à,á,â,ã,ä,åb,B,8,ßc,C,(,¢,©,Ç,çd,D,Ðe,E,€,&,£,È,É,Ê,Ë,è,é,ê,ë,3f,F,ƒg,Gh,H,#i,I,1,!,Ì,Í,Î,Ï,ì,í,î,ïj,Jk,Kl,Lm,Mn,N,Ñ,ño,O,0,¤,°,º,Ò,Ó,Ô,Õ,Ö,Ø,ø,ò,ó,ô,õ,ö,ðp,Pq,Qr,R,®s,S,5,$,š,Š,§t,T,7,†u,U,µ,Ù,Ú,Û,Ü,ù,ú,û,üv,Vw,Wx,X,×y,Y,Ÿ,¥,Ý,ý,ÿz,Z,ž,Ž-,_,~

Tips & Tricks

If you want to use a decorator (e.g.BREFCTF{...}), do not put it in the flag to variate. More info here.

Limitations

We are aware that this proposition does not solve all issues: if people share their write-up, they will be able to flag. This limitation is considered out of our scope, as we don’t think the Challenge on Demand solution fits this use case.

Nevertheless, our differentiation strategy can be the basis of a proper solution to the APG-problem (Automatic Program Generation): we are able to write one scenario that will differentiate the instances per source. This could fit the input of an APG-solution.

Moreover, it considers a precise scenario of advanced malicious collaborative sources, where shareflag consider malicious collaborative sources only (more “accessible” by definition).

What’s next ?

The final step from there is to ensure the quality of our work, with testing.

1.4.10 - Testing

Generalities

In the goal of asserting the quality of a software, testing becomes a powerful tool: it provides trust in a codebase. But testing, in itself, is a whole domain of IT. Some common requirements are:

- having those tests written with a programmation language (most often the same one as the software, enable technical skills sharing among Software Engineers)

- documented (the high-level strategy should be auditable to quickly assess quality practices)

- reproducible (could be run in two distinct environments and produce the same results)

- explainable (each test case sould document its goal(s))

- systematically run, or if not possible, as frequent as possible (detect regressions as soon as possible)

To fulfill those requirements, the strategy can contain many phases which each focused on a specific aspect or condition of the software under tests.

In the following, we provide the high-level testing strategy of the chall-manager Micro Service.

Software Engineers are invited to read it as such strategy is rare: we challenged the practices to push them beyond what the community does, with Romeo.

Testing strategy

The testing strategy contains multiple phases:

- unit tests to ensure core functions behave as expected ; those does not depend on network, os, files, etc. out thus the code and only it.

- functional tests to ensure the system behaves as expected ; those require the system to run hence a place to do so.

- integration tests to ensure the system is integrated properly given a set of targets ; those require whether the production environment or a clone of it.

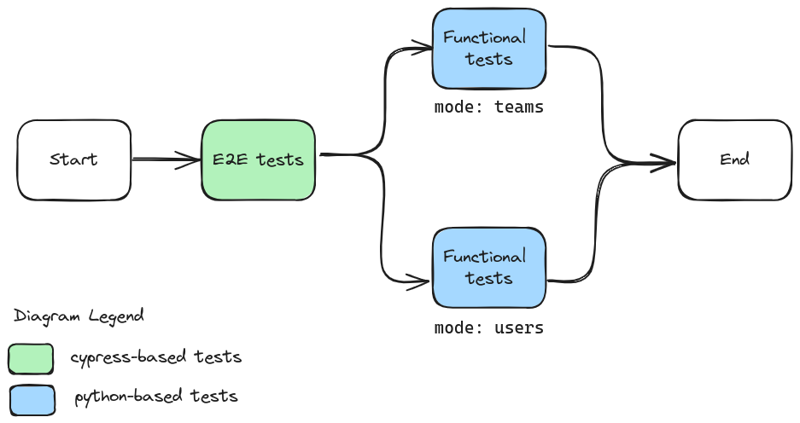

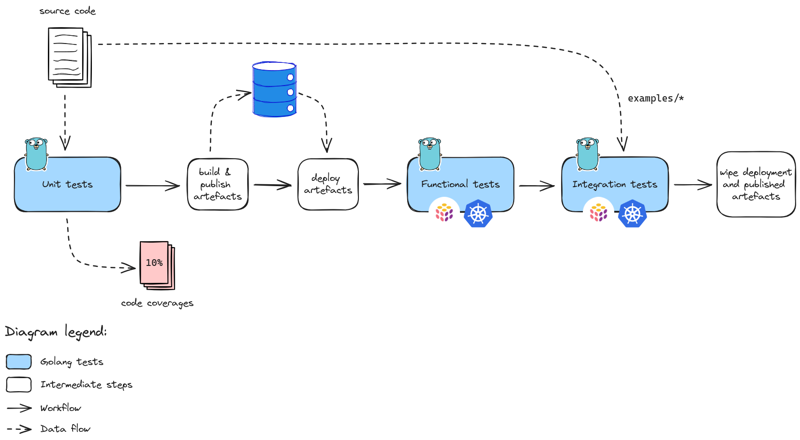

The chall-manager testing strategy.

Additional Quality Assurance steps could be found, like Stress Tests to assess Service Level Objectives under high-load conditions.

In the case of chall-manager, we write both the code and tests in Golang. To deploy infrastructures for tests, we use the Pulumi automation API and on-premise Kubernetes cluster (built using Lab and L3).

Unit tests

The unit tests revolve around the code and are isolated from anything else: no network, no files, no port listening, other tests, etc. This provides them the capacity to get run on every machine and always produce the same results: idempotence.

As chall-manager is mostly a wrapper around the Pulumi automation API to deploy scenarios, it could not be much tested using this step. Code coverage could barely reach \(10\%\) thus confidence is not sufficient.

Convention is to prefix these tests Test_U_ hence could be run using go test ./... -run=^Test_U_ -v.

They most often have the same structure, based on Table-Driven Testing (TDT). Some diverge to fit specific needs (e.g. no regression on an issue related to a state machine).

package xxx_test

import (

"testing"

"github.com/stretchr/testify/assert"

)

func Test_U_XXX(t *testing.T) {

t.Parallel()

var tests = map[string]struct{

// ... inputs, expected outputs

}{

// ... test cases each named uniquely

// ... fuzz crashers if applicable, named "Fuzz_<id>" and a description of why it crashed

}

for testname, tt := range tests {

t.Run(testname, func(t *testing.T) {

assert := assert.New(t)

// ... the test body

// ... assert expected outputs

})

}

}

Functional tests

The functional tests revolve around the system during execution, and tests behaviors of services (e.g. state machines). Those should be reproducible, but some unexpected behaviors could arise: network interruptions, no disk available, etc. but should not be considered as the first source of a failing test (the underlying infrastructure is most often large enough to run the test, without interruption).

Convention is to prefix these tests Test_F_ hence could be run using go test ./... -run=^Test_F_ -v.

They require a Docker image (build artifact) to be built and pushed to a registry. For Monitoring purposes, the chall-manager binary is built with the -cover flag to instrument the Go binary such that it exports its coverage data to filesystem.

As they require a Kubernetes cluster to run, you must define the environment variable K8S_BASE with the DNS base URL to reach this cluster.

Cluster should fit the requirements for deployment.

Their structure depends on what needs to be tested, but follows TDT approach if applicable. Deployment is performed using the Pulumi factory.

package xxx_test

import (

"os"

"path"

"testing"

"github.com/stretchr/testify/assert"

)

func Test_F_XXX(t *testing.T) {

// ... a description of what is the goal of this test: inputs, outputs, behaviors

cwd, _ := os.Getwd()

integration.ProgramTest(t, &integration.ProgramTestOptions{

Quick: true,

SkipRefresh: true,

Dir: path.Join(cwd, ".."), // target the "deploy" directory at the root of the repository

Config: map[string]string{

// ... more configuration

},

ExtraRuntimeValidation: func(t *testing.T, stack integration.RuntimeValidationStackInfo) {

// If TDT, do it here

assert := assert.New(t)

// ... the test body

// ... assert expected outputs

},

})

}

Integration tests

The integration tests revolve around the use of the system in the production environment (or most often a clone of it, to ensure no service interruptions on the production in case of a sudden outage). In the case of chall-manager, we ensure the examples can be launched by the chall-manager. This requires us the use of multiple providers, thus a specific configuration (to sum it up, more secrets than the functional tests).

Convention is to prefix these tests Test_I_ hence could be run using go test ./... -run=^Test_I_ -v.

They require a Docker image (build artifact) to be built and pushed to a registry. For Monitoring purposes, the chall-manager binary is built with the -cover flag to instrument the Go binary such that it exports its coverage data to filesystem.

As they require a Kubernetes cluster to run, you must define the environment variable K8S_BASE with the DNS base URL to reach this cluster.

Cluster should fit the requirements for deployment.

Their structure depends on what needs to be tested, but follows TDT approach if applicable. Deployment is performed using the Pulumi factory.

package xxx_test

import (

"os"

"path"

"testing"

"github.com/stretchr/testify/assert"

)

func Test_F_XXX(t *testing.T) {

// ... a description of what is the goal of this test: inputs, outputs, behaviors

cwd, _ := os.Getwd()

integration.ProgramTest(t, &integration.ProgramTestOptions{

Quick: true,

SkipRefresh: true,

Dir: path.Join(cwd, ".."), // target the "deploy" directory at the root of the repository

Config: map[string]string{

// ... configuration

},

ExtraRuntimeValidation: func(t *testing.T, stack integration.RuntimeValidationStackInfo) {

// If TDT, do it here

assert := assert.New(t)

// ... the test body

// ... assert expected outputs

},

})

}

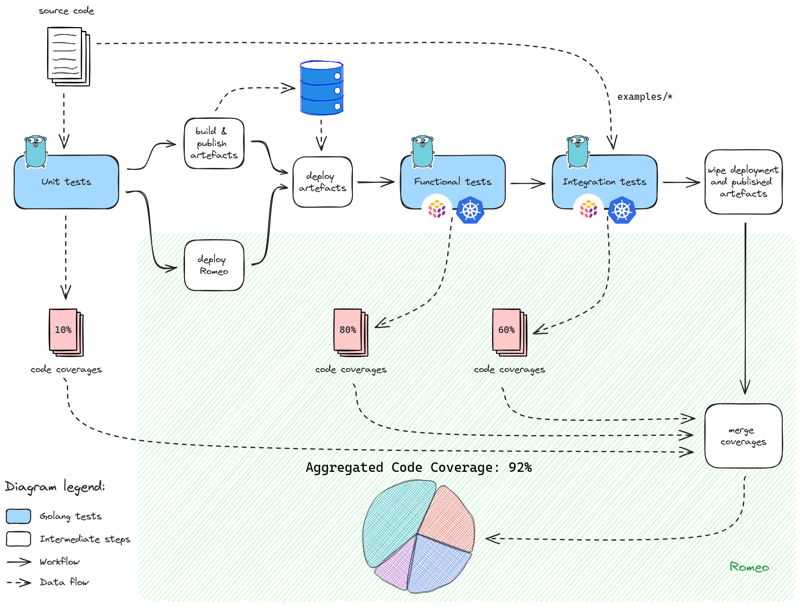

Monitoring coverages

Beyond testing for Quality Assurance, we also want to monitor what portion of the code is actually tested. This helps Software Development and Quality Assurance engineers to pilot where to focus the efforts, and in the case of chall-manager, what conditions where not covered at all during the whole process (e.g. an API method of a Service, or a common error).

By monitoring it and through public display, we challenge ourselves to improve to an acceptable level (e.g. \(\ge 85.00\%\)).

Disclaimer

Do not run after coverages: \(100\%\) code coverage imply no room for changes, and could be a burden to develop, run and maintain.

What you must cover are the major and minor functionalities, not all possible node in the Control Flow Graph. A good way to start this is by writing the tests by only looking at the models definition files (contracts, types, documentation).